Great, thank you. I’m very, very thankful to be here. Every event that people show up at during a pandemic is one that I’m very grateful to be a part of, because I know how tough a lot of these are. I also want to thank everybody here for inviting me to speak, and Molly for being an excellent co-speaker — I’ve seen some of the work that she’s done before, and it’s really amazing.

My role here today is really to provoke some initial thoughts. It’s a bit tough. As you can see here, I want to give a content warning for images of natural disasters, transport accidents, political unrest, conspiracy theories, and racism. Sounds like it’s very heavy, and indeed, part of it is, but really what this is about is challenging a set of belief systems in a way that I think is quite provocative. And what I’m hoping is that over the next couple of hours together, we’re going to move beyond just thinking of design ethics from a particular kind of track. So, that’s the content warning for today. It’s a little bit confronting, but it’s nothing extremely in your face. Before all of that though, I hope you’ll indulge me in a short video.

“Alexa, STOP!”

Young Child: Hey, Alexa? Play “Digger Digger!”

Alexa: I can’t find the song “Shake It Took Her”

Father: Say “Alexa...”

Young Child: Alexa? Play “Digger Digger!”

Alexa: I can’t find the song “Too Good Tucker”

Young Child: Alexa? Play “Digger Digger!”

Mother: Bobby, can you tell it to play...

Alexa: You want to hear a station for porn detected. Porno ringtone hot chick amateur girl—

Parents: No, no no no!

Alexa: —pussy anal dildo ringtone—

Father: Alexa, STOP!

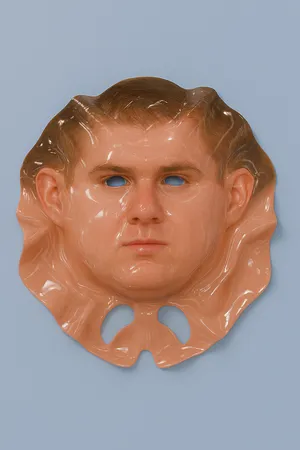

I’m fascinated by things like this. When the veneer of technology and design falls away, what we’re left with is the exposed and often ugly guts of our collective decision making, our biases, and our shortcomings. This little example here is this paradox of a designed system, right? Like, it’s a cute Kodak moment destroyed when the interface facade falls away and a tiny robot explodes in a tirade of obscenity to the small, bewildered child while this father pleads with the device to stop it.

The moment is really absurd, but it’s also kind of banal, because the Alexa isn’t actually malfunctioning. And yet, if any one of us were to walk up to a kid and shout a stream of obscenities in their face, we’d probably go to jail.

This clip is a perfect encapsulation of what we’re trying to understand at New Design Congress. In popular discourse, we often talk about living in the darkest timeline, and for the last few years especially, it feels that way. We’ve been learning about the interplay between the work of designers and the world around us and how it leads to these kinds of absurdities.

Today, I want to take a moment to share some of these observations. And then with Molly, and the work that we do together, collectively, I guess, reflect a little bit on some of these threads.

“Even though we feigned this whole line of like, "there probably aren’t any really bad unintended consequences," I think in the back deep, deep recesses of our minds, we— we kind of knew something bad could happen. But I think the way we defined it was not like this. It literally is at a point now, where I think we have created tools that are ripping apart the social fabric of how society works. That is truly where we are. And I would encourage all of you as the future leaders of the world to really internalize how important this is. If you feed the beast, that beast will destroy you.”

In 2017, at an event[1] held by the Stanford Business School, former Facebook exec Chamath Palihapitiya made international headlines by expressing a seemingly rare and frank level of remorse at his role building Facebook. His central belief is pretty stark: through a mix of good intentions and feelings of trepidation, Facebook’s unethical design now serves as a cause of disinformation, polarization and addiction.

This is reflected in other organizations’ work too, such as Tristan Harris’s Center for Humane Technology, which argues that the ills of modern society (addiction, misinformation, polarization, again) all trace to an unethical approach to designing interfaces and platforms. They argue that using Dark UX patterns and addictive design causes a form of “human downgrading” that is pervasive in all of our technology, reducing a person’s potential to be their best.

At scale, the Center for Humane Technology sees this as a technologically driven existential threat to humanity. These arguments share a common theme, where the decisions made when designing a system or an interface by people like us can directly cause terrible outcomes. I want to think about that a little bit, so let’s look at three brief moments in time through the lens of this design ethics critique.

In this video — it’s a viral video actually — an infant is delighted by a smartphone. But when his parents take the device away from him, the child responds with a horrendous tantrum. I’ve spared the room from the sound, but trust me, it’s blood curdling; you can see how loud it is in the child’s face. This is the Tragedy of Innocence, where the systems we have built can reprogram you no matter your age and cause you to develop a dependency on the screen soon after you enter this world.

This video shows the disturbingly filthy room of a teenage streamer, and it’s presented in this context where she sits ignoring the camera person in the room with her. You can kind of infer that she spends all this time online by the items around her desk. This is the Tragedy of Youth, in which the systems we have built are designed to trigger addictive vanity and cause you to perform and curate yourself online.

In this video, an older woman livestreams herself to a small audience. She’s a member of the right wing Q-Anon conspiracy, and she’s telling her viewers about her latest discovery: that McDonald’s is grinding children up into their food[2] and selling them as Big Macs in restaurants across America. The people in her comments are shocked and cheering her on in this vicious feedback loop. This is the Tragedy of Truth, in which the systems we have built are designed to push disinformation and cause you to question the world in incoherent or conspiratorial ways.

When Palihapitiya and Harris describe the unethical behaviors of technology builders, they are of course referring to outcomes like these and many more. They are members of a growing chorus of organizations, practitioners, academics and journalists who see these as forces that rip society apart. Intervention must be found through this introduction of design ethics.

But will that really solve anything? What if design ethics is not the answer? What if instead, inherent to the design ethics movement is a serious misunderstanding of the role that design systems play in our lives? What if design ethics is a form of reductionism that allows designers to escape the scrutiny of their work as it fails individuals and communities?

And, in a time when we have to collectively grapple with the realities of broader lived events, this is what I think is actually the case. And I think this is because all popular frameworks that include design ethics consider it as a practice-based response — a responsibility of an individual or a team to assess their motivations and what they choose to build. And they do this in two ways: through consent and inclusion as a part of a larger strategy. Here the onus for better outcomes lies at the feet of anyone who touches the design system or app or platform, and they can use these tools to respond in a way that’s more ethical. So, I want to talk about how these influenced us and where their shortcomings might be.

The limits to ethical consent

Consent in technology is drawn from an inclusive and progressive politic and it’s set as a foundation to advocate for the empowerment of the individual. In the interface, consent is seen as being involved with frictionless design, empowering users through simple language interactions. I don’t mean to single anybody out, but there are two good definitions of consent within technology. One is from Building Consentful Tech[3] by Una Lee and Dann Toliver, and the second is the Society Centered Design Principles[4] by Projects by IF. To quote from both of them: in Building Consentful Tech, consent is described as “freely given without duress and can be withdrawn at any time.” Cultivating consent means “the user must be informed and the process transparent.” Projects by IF describes design as “a political act” and that it’s “our responsibility to design for people’s rights. Privacy is not a luxury for those who can afford it; privacy is a human right, and we must create systems that remove the imbalance of power and instead promote equity and citizen empowerment.” Consent in this way is a key framing for all design systems and ethical design itself. So much so that an attempt at an ethically designed consent system even made it into the European Union’s General Data Protection Regulation.

In 2017, the average smartphone user in Europe or North America had 80 apps installed on their device[5], each with their own important use case. They all have a Terms of Service, and more importantly, each app interacts with a broader set of networks of dozens or hundreds or thousands of actors. Expecting a user to maintain an informed agreement with just one wholly unknown, unknowable system is impossible.

In this example that I’m showing here, this is a banking system that uses algorithms and machine learning to run the numbers on aspiring middle class individuals’ social media content to check whether or not they’re eligible for credit. Informed consent crumbles at scale, and it sits in direct conflict with this world saturated with platforms and stakeholders. I may have an agreement with Twitter, and I may have consented to Twitter, but does this extend ten years later to the algorithmic analysis of my creditworthiness, like you see here? These are real things that happen today.

Even beyond an individual’s ability to anticipate this, withdrawing consent is deeply problematic. Disabling Location Services is withdrawing consent, but sharing your location with someone you trust whilst on a date is a key safety technique for vulnerable users[6]. Location Services also help users with mobility or accessibility difficulties navigate the world more easily. To opt out is to reduce your quality of life or threaten your physical safety.

Inclusion and power

Design ethics often frames its criticism from a solutions-based representation. A problematic behavior is observed, identified as caused by technology, and then a solution is sought to address it. The criticism of early facial recognition technology and biometrics stands perhaps as one of the most troubling examples. It’s an example of popular solutionism from the last decade.

In this video from 2011,[7] an HP computer with biometric facial recognition ignores a black man’s face, but follows his white colleague’s face. These early biometrics were loaded with homogenous datasets and as a result were unable to detect or respond to non-white features, and the popular discourse at the time rightfully seized upon the racism embedded in these technologies.

As a result, these technologies are now more normalized and more resilient. In Seattle, shoppers can walk into Amazon stores with no cashiers and no payment terminals, pick up the items that they want and leave. This is facilitated by the Amazon Go[8] system, which is a set of building-wide facial recognition systems.

The World Food Programme’s current exploration of blockchain technology, called ‘Building Blocks’[9], is implemented in the Zaatari and Azraq refugee camps. We authorize the transactions using iris scanning or iris biometric technology. The iris scan actually triggers the private key of each beneficiary. [...] So we use that for cross checking but also other services like the WFP [World Food Programme] supermarket. [...] In this case, they could go to one of the supermarkets in the camps and redeem their entitlements. [...] You go and buy something when you need it at the time that is suitable.

The second order consequences of inclusive activism for biometric and facial recognition systemic biases

What starts as a criticism of the lack of inclusivity by facial recognition systems leads to two political outcomes, two political worlds each converging by the end of the decade — enabled, but not caused, by technology. What strikes me is that the user experience of these two worlds — the Amazon Go store and the food distribution facility in Jordan — are almost identical, but the key difference between them is power. It’s not hard to imagine an Amazon Go store expanding to the east coast of the United States, let’s say into an area that becomes a casualty of a big hurricane. When people are destabilized to such an extent, these two examples may be completely indistinguishable.

Indeed, the maturation of biometrics has a third, more insidious example of facilitating power. The world has been captivated for the last few years by the ongoing protests in Hong Kong, with confronting images of anonymity in numbers against a total surveillance adversary.

This is where the true use case for biometrics lies. While we were busy arguing over representation and ethics, biometrics revealed itself to be a tool for facilitating power. Ethical and inclusive facial recognition technology criticism is a total failure. The only defense left is to physically tear it from the Earth.

In 2018, researchers at the Association for Computing Machinery produced a study[10] that showed that explicitly instructing computer scientists to consider the organization’s Code of Ethics had no observed effect when compared to a control group. That design ethics is so widely championed means that we must urgently confront the reality that design ethics and its tools are also often flawed, even if its goals are noble and are reactions to very, very shocking realities we’ve seen over the past decade.

In almost all circumstances, from the social networks of today to finance and ecological malpractice, polarization, surveillance and beyond, there are few examples where technology has created entirely new problems. Instead, technology is an accelerant that brings speed and intensity to problems that are already here. And these create more visibility and large amounts of impact to these issues.

Furthermore, the mobilisation that we’ve done so far amounts to a form of solutionism, because we haven’t gone deep into the practice and we’ve instead tried to look at “behaving better,” let’s say. This is the belief that these small tweaks to existing practice lead to better, more ethical outcomes. At a time of profound destabilization, unchallenged practice and limited tools-based engagement may fix immediate problems, but allow them to reemerge in new ways.

The tumbling, intertwining tragedies of the networked world

So we looked at the three examples from Tristan Harris and Palihapitiya. I just want you to look at them again. Let’s look at the Tragedy of Innocence. Despite fitting the narrative of tech addiction in small children, toddlers get attached to objects and will often throw wild tantrums when these objects are taken from them. But beyond this simple observation, maybe these sorts of images aren’t just examples of addictive interfaces, but a symptom of familial fracture, of time-poor or overworked parents who rely on technologies to stimulate their children. Maybe this is an economic issue, rather than a tech addiction issue.

The Tragedy of Youth looks like social media causing addictive vanity:

I lost all of, lost all of my connections to people. So I’m just very lonely a lot. It’s, it’s sad, but that’s just how it is. To be... when you got to make a lot of sacrifices. If you’re not already in a relationship before you start streaming or during streaming, then it’s really hard to find someone new and you have been outside a very long time.

This frank testimony by a popular streamer suggests that this is in fact a symptom of economic precarity in which people with few skills are able to generate income as a sort of middle class celebrity with very little external support.

It’s easy to dismiss the Tragedy of Truth as examples of bigoted cranks.

Interviewer: She says she so badly wanted to feel like she belonged.

Woman: I came to the realization that like instead of accepting that I’m just a generally mediocre person. That group — those groups — make you feel like you are excellent, you are... it! Just for existing, for doing absolutely nothing.

Interviewer: You’re making it sound like if you’re a member of one of these groups, the qualifications are be white and be a loser.

Woman: I can’t really argue that.

Perhaps these are symptoms of broader social issues tumbling and intertwining together and emerging in these twisted ways. The Tragedy of Truth lies in a collection of problems: social isolation, lack of mental health services, and real or perceived fear of the political process. In each of these examples, technology is an accelerant of existing desperate problems that we can solve, but only if we look beyond the surface level solutions.

A future paralysed

I just want a little bit more time to look at one more thing. Let’s look into the future. The Tesla Cybertruck is the latest in a competitive global effort to build innovative transport. Much like the promise of biometrics, electric cars — often with autonomous driving technologies — are seen as this inevitable reality and some predict their deployment on public roads across the globe in the next ten years.

Content warning for a car accident.

In this video, a woman is hit and killed by an Uber autonomous vehicle[11]. This horrific incident is described as a software malfunction, but it also reignited debate about the morality of software. Should a car choose to sacrifice one person over another in an emergency? It’s a serious and difficult question with ethical implications for societies. Cars alter entire landscapes, and car infrastructure politics have dramatically reshaped cities. For the first time though, these cars must adapt to their environment instead, through software decision making. This accident and the following debate catapulted the ethics of self-driving cars into the public imagination.

This is an extraordinary video of people attempting to flee the 2011 Tsunami in Japan, by car[12]. When I think of self-driving vehicles, and in my research, I’ve found that to date there is little publicly accessible research that proposes how a car might behave in a systemic catastrophe. We are on the precipice of a climate disaster with some of the worst bushfires and forest fires we’ve seen all around the world. And yet, there’s little research that exists to describe how a self-driving car could navigate situations in which environmental data just cannot be acted upon. How can a car trained on millions of hours of traffic data respond to an ever changing pathway of water and debris, or intense heat and falling burning trees?

In aeroplanes, fly-by-wire technologies disengage in an emergency, relying on the pilot to take over. And it’s true that it’s likely that only human-driven cars will offer that same level of chance of survival in a crisis. As this question looms, it’s masked by this discussion of design ethics, of software bugs and the machine’s moral compass. We’re yet to even begin the discussion of the feasibility of this technology at a systemic level in an era of ecological change. The death of a cyclist is this tragedy, and it’s a symptom — a small representation — of this looming systemic issue. The self-driving car, wrapped in this critique of its ethics, is born from infrastructure that has had a significant impact on the climate, and we should be asking hard questions about the technology. Instead, we argue about the design ethics of a machine that may have no place in our world.

Beyond the issues of YouTube’s autoplay distracting kids from their homework, and boomer parents sharing conspiracies for Likes on Facebook, the lived experiences and struggles of users are at odds with how our infrastructure is built and conceived, and how much importance we place on these outcomes themselves. And I think what I want to end with is this, before we open for questions and move to Molly’s work:

As we begin this new, precarious decade, we must look at our work with the nuanced attention it deserves — as an accelerant that demands meaningful revision of practice to mitigate. I hope that is what we will get to, tonight. Our work is a part of a greater whole that amplifies — not causes — tremendous upheaval in our lives. All infrastructure is an expression of power. Interfaces and technologies are social, political and ecological accelerants, and solutionism at scale prevents true change.

From Decolonizing Privacy Studies[13], to understanding design’s central role as a marketization force on our lives[14], all design practice is built today on an unchallenged system whose foundations must be reimagined. We must look beyond the limitations of design ethics, and how the desire to change our practice in these “ethical” ways seduces us and masks our ongoing trajectories. But if we can change this, we’ll be able to respond to our fullest potential and avert the darkest timeline.

Ethical Design? No Thanks!

Design Ethics? No Thanks!

Thank you very much.

[1] Chamath Palihapitiya, Founder and CEO Social Capital, on Money as an Instrument of Change. Chamath Palihapitiya, Stanford Graduate School of Business, 21:48. 13 November 2017.

[2] A $100,000 Chicken McNugget Triggered a Child-Sex-Trafficking Conspiracy Theory. EJ Dickson, Rolling Stone. 3 August 2021.

[3] Building Consentful Tech. Una Lee and Dann Toliver. 2017.

[4] Society Centered Design Principles. Projects by IF.

[5] Global app downloads topped 175 billion in 2017, revenue surpassed $86 billion. Sarah Perez, TechCrunch. 17 January 2018.

[6] Why women are indefinitely sharing their locations. Rae Witte, TechCrunch. 30 April 2019.

[7] HP computers are racist. wzamen01, YouTube. 10 December 2009.

[8] Amazon opens a grocery store with no cashiers. Catherine Thorbecke, ABC News. 25 February 2020.

[9] Building Blocks. World Food Programme.

[10] Does ACM’s code of ethics change ethical decision making in software development? Andrew McNamara, Justin Smith, & Emerson Murphy-Hill; ESEC/FSE 2018: Proceedings of the 2018 26th ACM Joint Meeting on European Software Engineering Conference and Symposium on the Foundations of Software Engineering, Pages 729–733. October 2018. doi: 10.1145/3236024.3264833

[11] Uber self-driving crash: Footage shows moment before impact. BBC News. 22 March 2018.

[12] Weather Gone Viral: Tsunami Car Escape. The Weather Channel. 3 November 2016.

[13] Decolonizing Privacy Studies. Payal Arora, Television & New Media. May 2019. doi: 10.1177/1527476418806092

[14] Special Issue: Design and Neoliberalism. Arden Stern & Sami Siegelbaum, Design and Culture, 11:3, 265-277. September 2019. doi: 10.1080/17547075.2019.1667188